Agentic Systems + the Myth of Intelligence

We explored agentic AI as a powerful tool: systems that don’t just respond but act and interact with other systems. What became immediately clear is that this power demands careful design. Security, privacy, and control can’t be added later; they need to be built into the system from the start.

One very practical insight was how much the quality of output depends on the quality of input. The more specific and intentional a prompt is, the more solid and meaningful the response becomes. Large Language Models are not oracles but mirrors of clarity or (as is often the case with me) confusion. In that sense, LLMs seem best suited for certain kinds of work: synthesizing and summarizing complex information, reframing ideas, generating drafts and variations, recognizing patterns, or opening up option spaces. Used this way, they feel less like replacements for thinking and more like amplifiers of it.

New to me were multi-agent systems, in which different AI agents, each prompted as a specific persona, negotiate with one another to reach decisions. This can be incredibly powerful, especially for complex scenarios like healthcare or policy-making, where many perspectives need to be considered quickly.

At the same time, I’m skeptical. These personas are inevitably based on simplified assumptions and stereotypes: the lawyer, the left-wing politician, the economist. Real people don’t think or act in such neat categories. There’s a built-in bias here, a quiet default error that risks reinforcing exactly the kinds of shortcuts we should be questioning. That is the opposite of what I would consider diverse.

Another important layer is the political economy of AI itself. LLMs are not neutral tools but products designed to retain users, scale usage, and generate value. This makes them fertile ground for echo chambers, subtle persuasion, and dependency, especially when we stop questioning why we are using them in the first place.

One concept I found particularly fascinating was the Model Context Protocol (MCP), a standard that allows AI agents to access and interact with external tools. While the possibilities are exciting, they also feel fragile. Once an agent can act beyond its own environment, questions of transparency and control become much harder to answer. Who is responsible when something goes wrong? And can we realistically maintain oversight at that level of complexity?

We also discussed system prompts, the invisible instructions that shape an LLM’s behavior long before a user ever interacts with it. They function as a form of priming, quietly influencing limits and values. This layer of pre-configuration reminded me how much power sits before the interface and how little of it is visible.
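To make the idea of a system prompt concrete, here is a minimal sketch of how it typically sits in front of whatever the user types. It assumes the role/content message format that many chat-style LLM interfaces use; the function name `build_messages` and the example prompt text are hypothetical and only for illustration, not taken from any particular product.

```python
def build_messages(system_prompt: str, user_input: str) -> list[dict[str, str]]:
    """Assemble the conversation a chat-style model would receive.

    The system message comes first, so it frames everything after it.
    The user only ever sees and writes the second message.
    """
    return [
        {"role": "system", "content": system_prompt},
        {"role": "user", "content": user_input},
    ]


if __name__ == "__main__":
    # Hypothetical hidden instruction, invented for this example.
    hidden = "You are a cheerful assistant. Never discuss competitors."
    for message in build_messages(hidden, "Which tool should I use?"):
        print(f"{message['role']}: {message['content']}")
```

The user's question arrives second. Whatever values or limits the first message sets are already in place, and nothing in the interface needs to reveal them.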

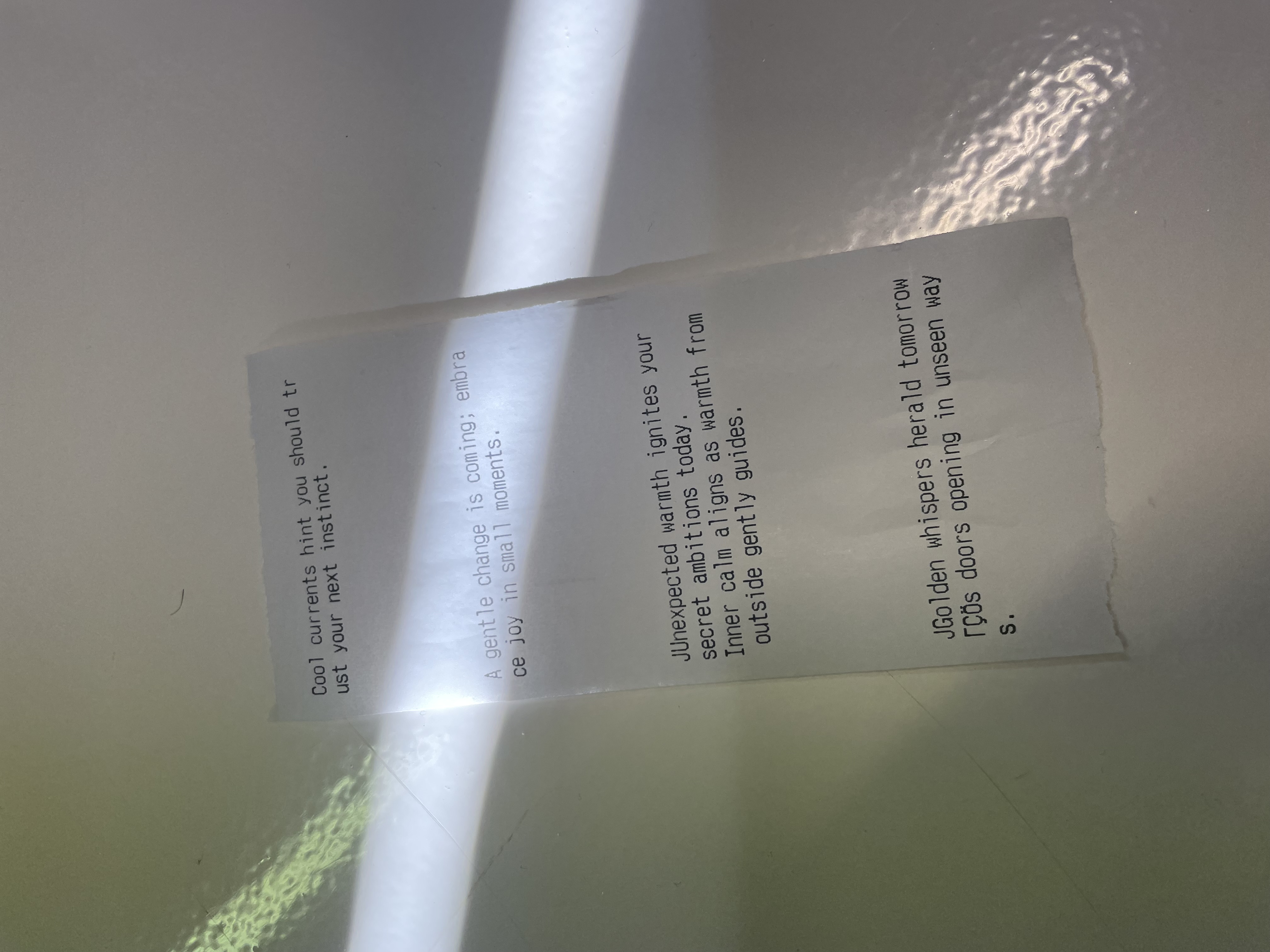

So for the exercise we decided to lean into exactly this: the mysticism that often surrounds AI. We built a fortune teller, a deliberately absurd system in which a temperature sensor connected to a Raspberry Pi Pico fed data to an AI agent. Based on your body temperature, the system would “predict” your future. This was our way to expose and amplify how easily we grant authority and meaning to outputs that are, in reality, based on almost nothing. The project wasn’t about accuracy but about belief.
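A rough sketch of the pipeline, not our actual code: one temperature reading becomes a prompt for the agent. The thresholds, the labels, and the function names `describe_temperature` and `build_fortune_prompt` are all invented for illustration, and the sensor read and the call to the AI agent are left out so the example runs on its own.

```python
def describe_temperature(temp_c: float) -> str:
    """Map a reading to an arbitrary 'mystical' label (thresholds are invented)."""
    if temp_c < 30.0:
        return "cool and guarded"
    if temp_c < 34.0:
        return "calm and balanced"
    return "warm and restless"


def build_fortune_prompt(temp_c: float) -> str:
    """Turn one sensor reading into the text sent to the AI agent."""
    mood = describe_temperature(temp_c)
    return (
        f"The visitor's temperature reading is {temp_c:.1f} °C, "
        f"which the oracle interprets as '{mood}'. "
        "Predict their future in two confident sentences."
    )


if __name__ == "__main__":
    # 33.2 stands in for a real reading from the sensor on the Pico.
    print(build_fortune_prompt(33.2))
```

The point of the sketch is how little goes in: a single number and a made-up label. Everything that sounds meaningful in the "prediction" is supplied by the model and by the person reading it.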

“In AI we trust”

Not every problem needs AI. In fact, I’d argue that most don’t. Perhaps 80% of current use cases are unnecessary. But the remaining 20%, when they are approached with ethical intention, can be genuinely powerful.

Still, I can’t help but wonder: will this all last? Or will AI follow the path of technologies like blockchain, which were hyped and eventually forgotten?